May 29, 2026

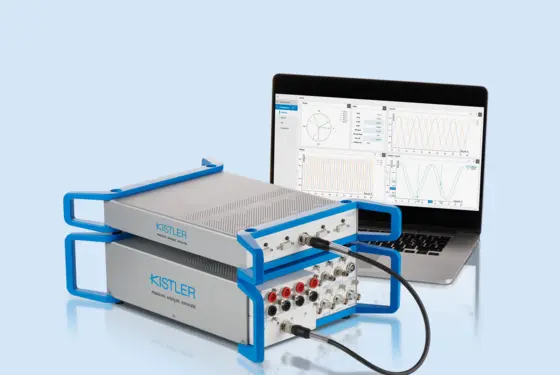

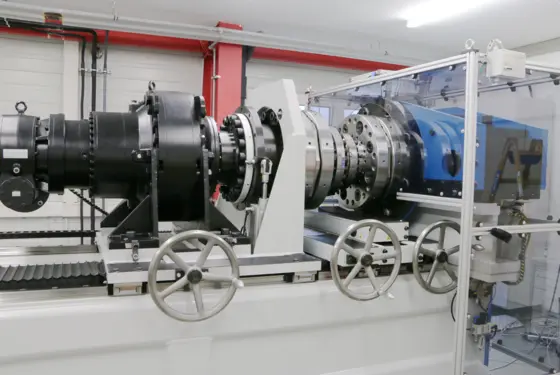

Kistler integrates force sensors into the tool holder of electromechanical joining systems

Electromechanical NCFx Pro joining systems are now available with two sensor technologies, offering greater flexibility in the force measurement range